Static 3D Gaussian Splatting captures a frozen moment — a building, a streetscape, an interior — as millions of overlapping Gaussian ellipsoids that render in real time. But the real world moves. People walk through spaces, vehicles pass through intersections, trees sway in wind, and construction sites change daily. 4D Gaussian Splatting (4DGS) extends the core 3DGS framework into the time domain, enabling photorealistic reconstruction of dynamic scenes from multi-view video footage.

If you work in 3D scanning, film production, autonomous vehicle development, or any field that deals with moving environments, 4DGS represents a significant shift in what is possible with real-time 3D reconstruction. This guide explains what 4DGS is, how it works under the hood, where it is being applied commercially, and what it means for professional scanning services.

What Is 4D Gaussian Splatting?

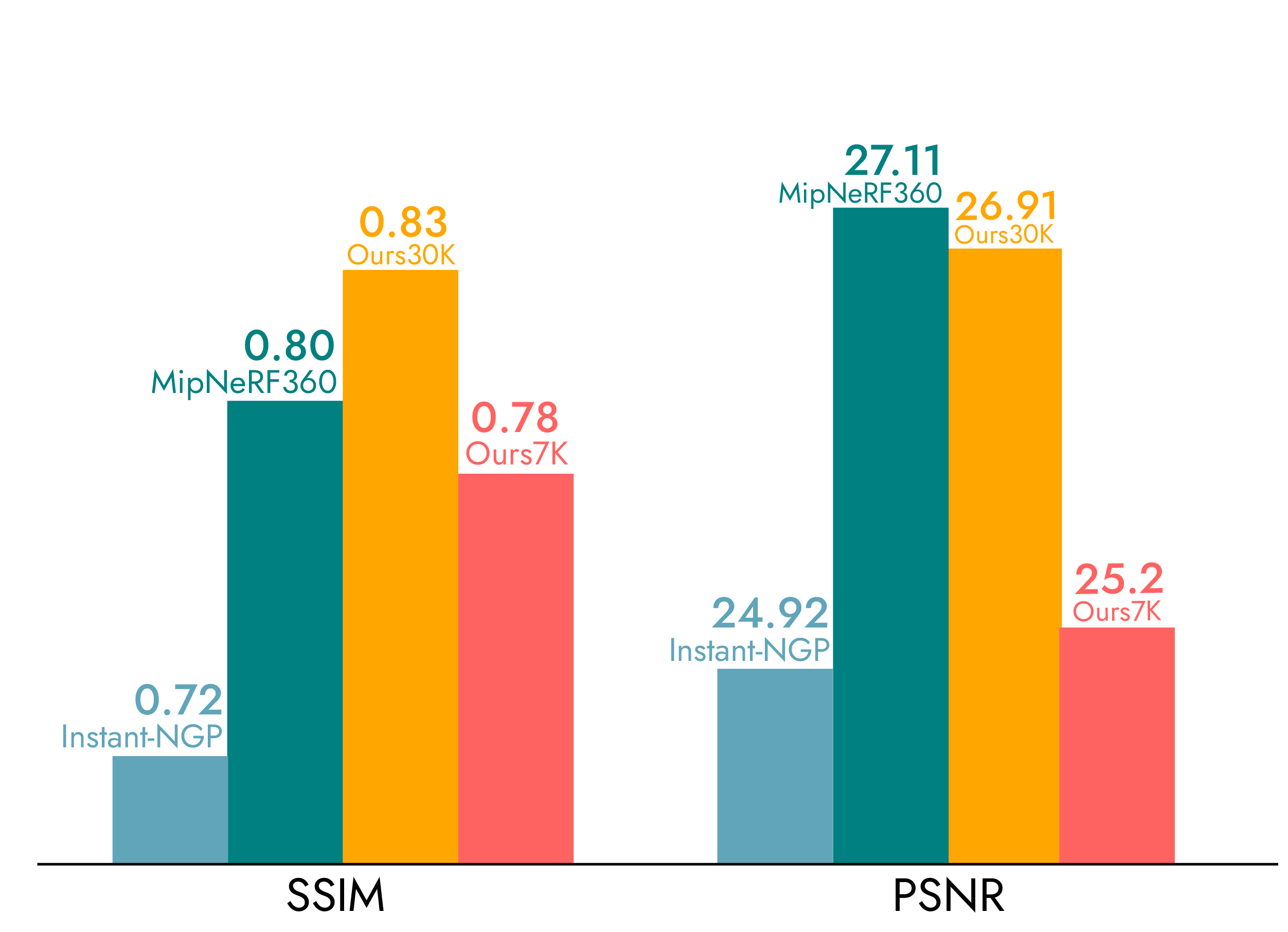

Standard 3D Gaussian Splatting (3DGS), introduced by Kerbl et al. at SIGGRAPH 2023, represents a 3D scene as a collection of anisotropic Gaussian primitives. Each Gaussian has a position, covariance matrix (defining its shape and orientation), color (represented via spherical harmonics), and opacity. A differentiable rasterizer projects these Gaussians onto the image plane for rendering at 100+ FPS — far faster than Neural Radiance Fields (NeRF) and visually superior to traditional mesh photogrammetry for many use cases.
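To make the covariance term concrete: the original 3DGS paper factors each Gaussian's covariance as Σ = R S Sᵀ Rᵀ, where S is a diagonal scale matrix and R is a rotation stored as a quaternion. The sketch below builds that matrix in plain Python. The function names are illustrative and not taken from any library.

```python
import math

def quat_to_rotation(q):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    w, x, y, z = q
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n  # normalize to a unit quaternion
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def covariance_from_scale_rotation(scale, quat):
    """Build Sigma = R S S^T R^T from per-axis scales and a quaternion."""
    R = quat_to_rotation(quat)
    # M = R @ S, with S = diag(scale)
    M = [[R[i][k] * scale[k] for k in range(3)] for i in range(3)]
    # Sigma = M @ M^T, symmetric and positive semi-definite by construction
    return [[sum(M[i][k] * M[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)]

# An ellipsoid stretched along x, then rotated 90 degrees about the z axis.
half = math.sqrt(0.5)
sigma = covariance_from_scale_rotation((2.0, 1.0, 0.5), (half, 0.0, 0.0, half))
```

After the rotation, the long axis of the ellipsoid points along y, so the largest diagonal entry of `sigma` moves from the x slot to the y slot. Storing scale and rotation separately, rather than the raw matrix, keeps the covariance valid while the optimizer updates it.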

4D Gaussian Splatting adds a temporal dimension to this representation. Instead of modeling a single static moment, 4DGS reconstructs how a scene changes over time. The fundamental challenge is: how do you represent millions of Gaussians that move, deform, appear, and disappear across a video sequence — while maintaining real-time rendering and visual quality?

The answer, depending on the research approach, involves one of several strategies:

- Deformation fields: A neural network learns a mapping from a canonical (rest) space to each time step. Every Gaussian has a fixed position in canonical space, and the deformation field warps it to the correct position at time t. This is memory-efficient because you store one set of Gaussians plus a compact deformation model.

- Time-conditioned Gaussian parameters: Each Gaussian’s properties (position, rotation, scale, color) are modeled as functions of time. Disentangled 4DGS separates time-dependent properties like velocity and time-varying scale from static properties, reducing redundant computation while preserving dynamic details like fluid spray and glass reflections.

- Explicit 4D Gaussians: Rather than warping 3D Gaussians through time, some methods represent primitives directly in 4D spacetime (x, y, z, t). This allows the renderer to slice the 4D volume at any time coordinate to produce a 3D frame.

- Hybrid approaches: Methods like MEGA (Memory-Efficient 4D Gaussian Splatting) combine entropy-constrained Gaussian deformation with temporal-viewpoint aware color prediction, achieving approximately 8× compression compared to naive 4D Gaussian storage while maintaining reconstruction quality.
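Two of the strategies above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not any paper's implementation: `deform` is a hand-written sway function standing in for the learned deformation network, and `slice_4d_gaussian` applies standard Gaussian conditioning to a single 4D primitive over (x, y, z, t). All names and numbers are hypothetical.

```python
import math

def deform(canonical_xyz, t, amplitude=0.1, frequency=1.0):
    """Toy deformation field: sway along x, scaled by height z.

    Stand-in for a learned network, which would predict offsets
    (and often rotation and scale changes) from position and time.
    """
    x, y, z = canonical_xyz
    offset = amplitude * z * math.sin(2.0 * math.pi * frequency * t)
    return (x + offset, y, z)

def slice_4d_gaussian(mean4, cov4, t):
    """Condition a 4D Gaussian over (x, y, z, t) on a fixed time t.

    Returns the 3D mean, the 3D covariance, and a temporal weight in
    (0, 1] that fades the primitive out away from its temporal center.
    """
    mu_t = mean4[3]
    var_t = cov4[3][3]
    cross = [cov4[i][3] for i in range(3)]  # covariance of x, y, z with t
    dt = t - mu_t
    mean3 = [mean4[i] + cross[i] * dt / var_t for i in range(3)]
    cov3 = [[cov4[i][j] - cross[i] * cross[j] / var_t for j in range(3)]
            for i in range(3)]
    weight = math.exp(-0.5 * dt * dt / var_t)
    return mean3, cov3, weight

# Deformation field: a canonical point 2 units high, a quarter period in.
swayed = deform((0.0, 0.0, 2.0), t=0.25)

# Explicit 4D Gaussian centered at t = 0.5 whose x position correlates with t.
mean4 = [0.0, 0.0, 0.0, 0.5]
cov4 = [
    [1.0, 0.0, 0.0, 0.1],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.1, 0.0, 0.0, 0.04],
]
mean3, cov3, weight = slice_4d_gaussian(mean4, cov4, t=0.7)
```

In the 4D case, the correlation between x and t acts like a velocity: slicing at a later time shifts the 3D mean along x, and the weight drops as the query time moves away from the temporal center. The deformation-field version instead keeps one canonical set of Gaussians and recomputes their positions per frame.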

Key Research and Development Timeline

The progression from static 3DGS to temporal reconstruction has moved remarkably fast — less than three years from foundational paper to commercial tooling.

2023: The Foundation

The original “3D Gaussian Splatting for Real-Time Radiance Field Rendering” paper by Kerbl et al. (INRIA/Max Planck) was presented at SIGGRAPH 2023. Within months, researchers began experimenting with temporal extensions. Early 4DGS work from groups at Zhejiang University and Shanghai AI Lab demonstrated that deformation fields could be applied to Gaussian primitives with minimal quality loss.

2024: Rapid Maturation

Multiple research groups published competing approaches:

- Disentangled 4DGS (2024) separated time-dependent properties from static Gaussian parameters, enabling more efficient processing of complex dynamic scenes

- OMG4 (Optimized Minimal 4D Gaussian Splatting) introduced a three-stage pipeline — Gaussian Sampling, Pruning, and Merging — achieving over 60% model size reduction

- 4DGS-1K addressed temporal redundancy through a Spatial-Temporal Variation Score, dramatically reducing storage for long sequences

- 4DRGS from ShanghaiTech reconstructed 3D vessels from sparse-view dynamic DSA images, demonstrating medical applications

2025–2026: Commercial Crossover

The transition from research to production accelerated in 2025:

- Nuke 17.0 (The Foundry, late 2025) shipped with native Gaussian Splatting support for VFX compositing

- Volinga partnered with XGRIDS for dynamic volumetric content capture and real-time GS rendering

- 150-camera arrays by Xangle demonstrated production-quality 4DGS capture for commercial advertising

- NVIDIA’s Play4D (SIGGRAPH 2025) showed accelerated free-viewpoint video streaming for VR and light field displays

- Lumina-4DGS achieved 31.12 dB PSNR on the Waymo Open Dataset with robust handling of illumination inconsistencies from automotive multi-camera rigs

How 4DGS Differs from Static 3DGS

For professionals already familiar with static Gaussian Splatting — the kind processed by tools like DJI Terra, Polycam, Luma AI, or Nerfstudio — the key differences are:

| Aspect | Static 3DGS | 4D Gaussian Splatting |
|---|---|---|
| Input | Multi-view photographs (single moment) | Multi-view synchronized video |
| Output | Frozen 3D scene | Time-varying 3D sequence |
| Capture | Single drone flight or scan pass | Synchronized multi-camera array or sequential video |
| Processing | Minutes to hours (GPU) | Hours to days (significant GPU compute) |
| File size | 50 MB–2 GB per scene | 500 MB–50 GB+ per sequence |
| Rendering | 100+ FPS | 30–100+ FPS depending on method |
| Commercial tools | DJI Terra, Polycam, PostShot, Luma AI | Nerfstudio (research), Volinga (commercial), Nuke 17+ |
| Primary use | Architecture, construction, real estate, heritage | Film VFX, sports replay, autonomous driving, medical |

The capture requirements are fundamentally different. Static 3DGS works with the same drone imagery or handheld photos used for photogrammetry — a single flight over a building produces hundreds of overlapping images that feed into DJI Terra or similar tools. 4DGS requires synchronized multi-view video — multiple cameras capturing the same dynamic scene from different angles simultaneously.
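The file-size gap in the comparison can be roughly estimated from the parameter count per Gaussian. The sketch below assumes the commonly used 3DGS layout (position, scale, rotation quaternion, opacity, and degree-3 spherical harmonics) stored as uncompressed 32-bit floats. The scene size and frame count are illustrative assumptions, and real files differ once pruning, quantization, or compression is applied.

```python
FLOAT_BYTES = 4  # assumption: uncompressed 32-bit floats

def floats_per_gaussian(sh_degree=3):
    """Count stored floats for one Gaussian under the assumed layout."""
    sh_coeffs = 3 * (sh_degree + 1) ** 2  # RGB x (degree + 1)^2 coefficients
    position, scale, rotation, opacity = 3, 3, 4, 1
    return position + scale + rotation + opacity + sh_coeffs

def static_scene_bytes(num_gaussians, sh_degree=3):
    """Raw size of one static scene."""
    return num_gaussians * floats_per_gaussian(sh_degree) * FLOAT_BYTES

def naive_sequence_bytes(num_gaussians, num_frames, sh_degree=3):
    """Raw size if every frame stored its own full set of Gaussians."""
    return num_frames * static_scene_bytes(num_gaussians, sh_degree)

# Hypothetical scene: one million Gaussians, 300 frames (10 s at 30 FPS).
scene_mb = static_scene_bytes(1_000_000) / 1e6
sequence_gb = naive_sequence_bytes(1_000_000, 300) / 1e9
print(f"static scene: {scene_mb:.0f} MB, naive sequence: {sequence_gb:.1f} GB")
```

Under these assumptions a static scene comes to about 236 MB, while storing a full copy per frame reaches about 70.8 GB for ten seconds of footage. That gap is why practical 4DGS methods share Gaussians across time instead of storing each frame independently.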

Commercial Applications

Film and Virtual Production

The film industry is among the earliest commercial adopters of 4DGS. Virtual production stages with LED volume walls already use static GS environments (rendered at 100+ FPS in Unreal Engine). 4DGS extends this to dynamic environment elements — crowds walking through a street scene, vehicles in motion, water features, weather effects — that respond naturally to camera movement during filming.

The Xangle 150-camera array demonstrated in 2025 that production-quality 4DGS capture is commercially viable for advertising and film inserts. A single capture session produces a volumetric performance that can be re-lit, re-framed, and composited from any angle — eliminating reshoots for many scenarios.

For professional scanning services, this means virtual production location scouts can evolve from static environment captures to dynamic scene documentation that includes ambient motion and activity.

Sports Replay and Broadcasting

Free-viewpoint replay, the ability to freeze a sporting moment and rotate around it, has been a broadcast technology goal for over a decade. Traditional approaches using mesh reconstruction from multi-camera arrays (like Intel True View, now discontinued) were computationally expensive and visually imperfect. 4DGS offers photorealistic quality at a fraction of the processing cost.

NVIDIA’s Play4D research demonstrated accelerated streaming of 4DGS captures suitable for real-time broadcast integration, including VR and light field display output. This technology enables broadcasters to offer viewers interactive replay from any angle, with photorealistic quality that mesh-based approaches could not achieve.

Autonomous Vehicle Development

Self-driving systems require vast amounts of training data showing diverse traffic scenarios. Rather than driving millions of test miles, AV companies are increasingly using 3D scene reconstruction to generate synthetic training data. Lumina-4DGS specifically addresses the autonomous driving use case, handling photometric inconsistencies from vehicle-mounted cameras with independent exposure settings — achieving 31.12 dB PSNR with 1.89m depth RMSE on the Waymo Open Dataset.
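For readers unfamiliar with the two metrics quoted above, the sketch below shows their standard definitions in plain Python. PSNR compares rendered and reference pixel values, and RMSE compares predicted and reference depths. The sample values are made up for illustration and are not from the Waymo Open Dataset or the Lumina-4DGS evaluation.

```python
import math

def psnr(rendered, reference, max_value=1.0):
    """Peak signal-to-noise ratio in dB for two equal-length pixel lists."""
    mse = sum((a - b) ** 2 for a, b in zip(rendered, reference)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)

def rmse(predicted, truth):
    """Root-mean-square error, e.g. for depth values in meters."""
    mse = sum((a - b) ** 2 for a, b in zip(predicted, truth)) / len(truth)
    return math.sqrt(mse)

def rms_error_from_psnr(psnr_db, max_value=1.0):
    """Invert PSNR to the RMS pixel error it implies."""
    return max_value / (10.0 ** (psnr_db / 20.0))

# Illustrative values only.
example_psnr = psnr([0.5, 0.5], [0.6, 0.4])          # pixels in [0, 1]
example_rmse = rmse([10.0, 20.0], [11.0, 18.0])      # depths in meters
implied_error = rms_error_from_psnr(31.12)
```

Read this way, a PSNR of 31.12 dB corresponds to an RMS pixel error of roughly 2.8% of the full intensity range, which gives a more intuitive sense of the reported image quality.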

4DGS enables AV developers to reconstruct real-world driving scenarios from multi-camera recordings, then re-render them from novel viewpoints and with modified conditions (different lighting, added vehicles, changed traffic patterns) for perception system training.

Medical Imaging and Surgical Training

4DRGS from ShanghaiTech demonstrated that 4D Gaussian Splatting can reconstruct 3D vascular structures from sparse-view dynamic digital subtraction angiography (DSA) images. This achieves reconstruction in minutes rather than hours, with reduced radiation exposure compared to traditional 3D DSA protocols.

The medical application extends to surgical training — 4DGS captures of procedures enable trainees to review operations from any viewpoint, with temporal controls to pause, rewind, and examine specific moments in detail.

Current Limitations

4DGS is a rapidly advancing technology, but several practical limitations remain for professional deployment:

Data requirements are substantial. 4DGS capture requires synchronized multi-view video — typically 12–150+ cameras recording simultaneously at matched frame rates and exposures. This is fundamentally different from the single-camera or single-drone workflows used for static GS. Professional capture rigs are expensive ($50,000–$500,000+ depending on camera count and synchronization requirements).
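As a rough illustration of what "synchronized" means in practice, the sketch below checks per-frame timestamp skew across cameras. The camera names, timestamps, and 1 ms tolerance are assumptions for the example. Acceptable skew depends on how fast the scene moves and on the capture hardware.

```python
def max_sync_skew(timestamps_by_camera):
    """Return, per frame index, the timestamp spread across cameras (seconds)."""
    num_frames = min(len(ts) for ts in timestamps_by_camera.values())
    skews = []
    for i in range(num_frames):
        stamps = [ts[i] for ts in timestamps_by_camera.values()]
        skews.append(max(stamps) - min(stamps))
    return skews

def frames_out_of_sync(timestamps_by_camera, tolerance_s=0.001):
    """List frame indices whose skew exceeds the tolerance."""
    skews = max_sync_skew(timestamps_by_camera)
    return [i for i, skew in enumerate(skews) if skew > tolerance_s]

# Hypothetical capture: two cameras at roughly 30 FPS, one drifting on frame 2.
timestamps = {
    "cam_a": [0.0000, 0.0333, 0.0667],
    "cam_b": [0.0002, 0.0335, 0.0700],
}
bad_frames = frames_out_of_sync(timestamps)
```

Here only the third frame (index 2) exceeds the tolerance, since the two cameras disagree by about 3.3 ms. A check like this can run before training to drop or flag frames that would otherwise show up as ghosting on fast-moving subjects.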

Processing time scales with complexity. While static GS can process hundreds of images in minutes, 4DGS training on a multi-view video sequence can take hours to days on high-end GPU hardware (RTX 4090 or A100 recommended). Methods like OMG4 reduce model sizes by 60%+, but the initial training remains compute-intensive.

Temporal artifacts persist. Fast motion, occlusion-disocclusion events, and topological changes (objects appearing or disappearing) remain challenging. Deformation field approaches struggle with non-rigid deformation of complex geometry, and explicit 4D methods consume significant memory for long sequences.

Standardization is nascent. While static GS is converging on standards — the Khronos glTF KHR_gaussian_splatting extension, OpenUSD schemas, Cesium 3DTiles support — there is no equivalent standard for 4DGS sequences. File formats vary between research implementations, and no production pipeline has emerged as dominant.

Accuracy is visualization-grade, not survey-grade. Like static GS (mean geometric error of 7.82cm), 4DGS prioritizes visual fidelity over dimensional accuracy. For applications requiring engineering-precision measurements of dynamic processes, 4DGS alone is insufficient — though hybrid approaches combining 4DGS visualization with LiDAR measurement are an active research area.

4DGS vs. Alternative Dynamic 3D Methods

4DGS vs. Volumetric Video

Traditional volumetric video (Microsoft Mixed Reality Capture, 8i, Arcturus) uses multi-camera arrays to reconstruct mesh-based 3D video. 4DGS offers higher visual quality (photorealistic vs. mesh artifacts), smaller file sizes (Gaussian representation is more compact than per-frame meshes), and faster rendering. However, volumetric video has more mature capture infrastructure and established production pipelines.

4DGS vs. Dynamic NeRF

Neural Radiance Fields preceded Gaussian Splatting, and dynamic NeRF variants (D-NeRF, HyperNeRF, Nerfies) can also reconstruct temporal scenes. 4DGS advantages: 10–100× faster rendering (explicit rasterization vs. ray marching), easier editing and manipulation, and more predictable quality. NeRF advantages: potentially better handling of complex view-dependent effects and more established research tooling via Nerfstudio.

4DGS vs. Multi-View Stereo Video

Classical multi-view stereo computes depth maps per frame and fuses them into per-frame 3D reconstructions. This approach is well-understood but produces noisy geometry and lacks temporal consistency. 4DGS produces smoother, more visually coherent results by jointly optimizing across all frames and viewpoints.

What 4DGS Means for Professional Scanning Services

For companies like THE FUTURE 3D that provide professional Gaussian Splatting services, 4DGS represents an emerging opportunity rather than an immediate service offering. The current state of the technology creates a clear division:

Today’s commercial GS services focus on static scene capture using proven tools like DJI Terra for aerial processing, SuperSplat for scene editing, and SplatForge for Blender integration. These workflows use existing drone and scanner hardware — DJI M4E with Zenmuse P1 for aerial capture, Trimble X12 (±2mm accuracy) for survey-grade reference, and handheld options from the broader ecosystem.

Tomorrow’s 4DGS services will require additional capture infrastructure (multi-camera synchronization rigs), specialized processing pipelines, and new delivery workflows. The film and virtual production vertical is the most likely early adopter for professional 4DGS services, given the existing demand for dynamic environment content and the budget scale ($10,000–$50,000+ per project) that justifies infrastructure investment.

Professional scanning companies that build expertise in static GS today — understanding scene optimization, artifact removal, multi-format delivery, and hybrid GS+LiDAR workflows — are best positioned to expand into 4DGS services as the technology matures and client demand emerges.

Use THE FUTURE 3D’s GS Method Selector to determine which approach fits your project, or explore the GS Cost Estimator for professional service pricing.

Frequently Asked Questions

What is the difference between 3D and 4D Gaussian Splatting?

3D Gaussian Splatting reconstructs a static scene from multi-view photographs — the output is a frozen 3D moment rendered in real time. 4D Gaussian Splatting adds a temporal dimension, reconstructing dynamic scenes from multi-view video. The output is a time-varying 3D sequence where objects move, deform, appear, and disappear naturally. 3DGS uses standard photographs as input; 4DGS requires synchronized multi-camera video.

Can I create 4D Gaussian Splats with a phone or consumer camera?

Currently, no. 4DGS requires synchronized multi-view video from calibrated camera arrays (typically 12–150+ cameras). Consumer apps like Polycam and Luma AI produce static 3DGS from single-camera input. Some research projects have explored monocular 4DGS from single video, but quality is significantly lower than multi-view approaches and these are not yet available in consumer tools.

How much does a 4DGS capture cost?

Professional 4DGS capture requires specialized multi-camera rigs ranging from $50,000 to $500,000+ in equipment. Session costs for commercial 4DGS capture (such as the Xangle 150-camera array for advertising) typically start at $10,000–$25,000 per capture session. Processing adds GPU compute costs. By comparison, static professional GS services start at $2,250 using existing drone and scanner hardware.

Is 4D Gaussian Splatting accurate enough for engineering measurement?

No. Like static GS (mean geometric error of 7.82cm), 4DGS prioritizes visual fidelity over geometric precision. For dynamic scenes requiring engineering-grade measurement, a hybrid approach combining 4DGS visualization with survey-grade LiDAR or photogrammetry is recommended. See our Gaussian Splatting accuracy guide for detailed accuracy benchmarks.

What software supports 4D Gaussian Splatting?

As of early 2026, 4DGS tooling is primarily research-grade: Nerfstudio supports several 4DGS methods, and Volinga offers commercial 4DGS processing. The Foundry’s Nuke 17.0 added native GS support for VFX compositing. DJI Terra, PostShot, and other static GS tools do not yet support 4DGS. Standard file formats for 4DGS sequences have not been established.

When will 4DGS be available for mainstream professional use?

Based on the current development trajectory — commercial demos in 2025, tool integration beginning in late 2025, and standards discussions underway — mainstream professional 4DGS services are likely 2–3 years from broad availability. The film and virtual production industry will likely be the first commercial vertical, followed by sports broadcasting, autonomous vehicle development, and eventually architecture and construction.

Does THE FUTURE 3D offer 4DGS services?

THE FUTURE 3D currently offers professional static Gaussian Splatting services using DJI Terra, SuperSplat, SplatForge, and hybrid GS+LiDAR workflows. We are actively monitoring 4DGS developments and evaluating infrastructure requirements for future service offerings. For static GS projects — building documentation, virtual production environments, construction visualization — contact us for a quote.

Can 4DGS be used for object scanning?

4DGS can reconstruct dynamic objects (a person moving, a machine operating), but THE FUTURE 3D does not scan individual objects, products, props, or vehicles. Our services focus on buildings, environments, and sites. For object-scale dynamic capture, research tools like Nerfstudio or commercial volumetric capture studios are appropriate alternatives.
